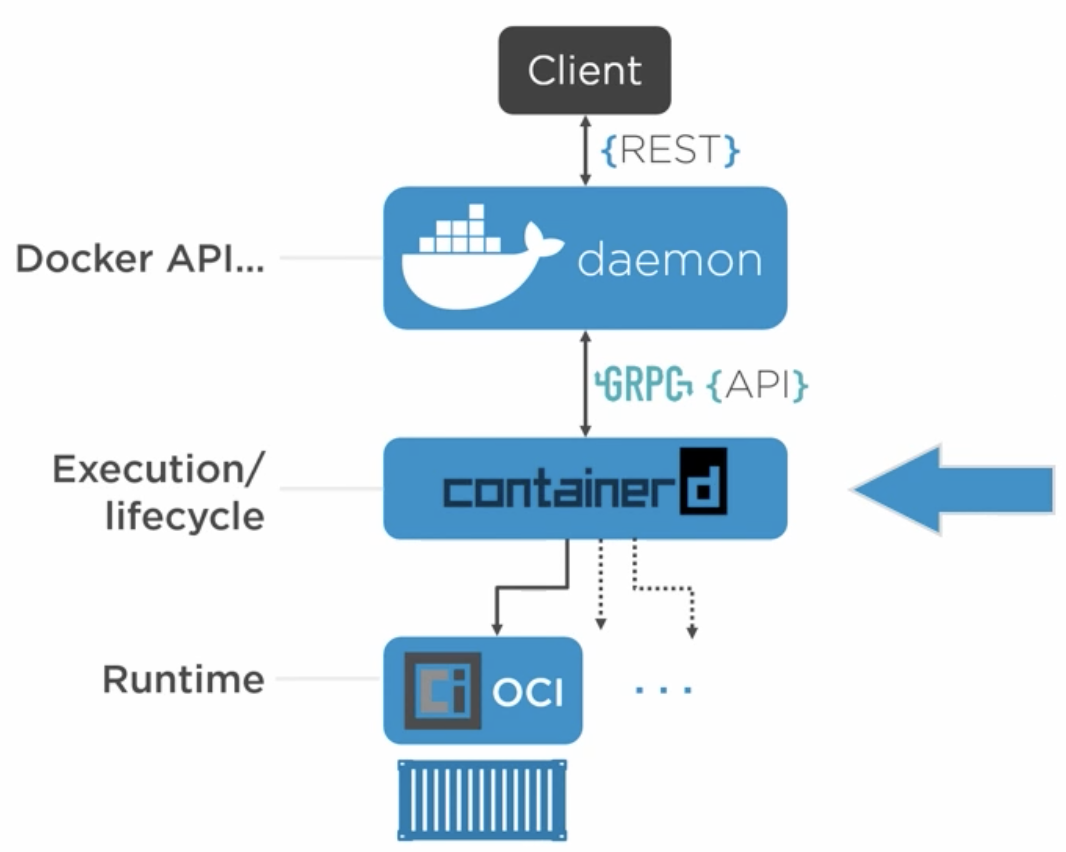

Spot's computing power can be extended through additional computation payloads mounted on the robot. Boston Dynamics offers CORE I/O computation payloads, but users can attach other types of computation payloads as well. This document describes how to configure software to run on these computation payloads. Most instructions describe how to manage custom software on the CORE I/O, but the steps apply to other computation payloads as well.

The purpose of computation payloads is to run custom software applications on the robot that interact with the robot software system and the other payloads attached to Spot. For CORE I/O, we suggest using the provided Extensions framework to install, configure and run custom software. The suggested approach to develop, test and deploy software on computation payloads is as follows:

1. Implement and test the application in a development environment, such as a development computer. In this case, the development computer connected to Spot's WiFi acts as the computation payload for the robot. Testing in the development environment allows for quick iterations and updating of the application code.
2. Dockerize the application and test the docker image with the application in the development environment. The change in this step compared to the previous one is to run the application inside a docker container, rather than on the host OS of the development environment. This step verifies that the application works correctly in a containerized environment, and it acts as a stepping stone to the final step below. The section Create Docker Images describes how to create and test the docker images in the local development environment.
3. If targeting the CORE I/O payload, combine all docker containers with a docker-compose configuration file and package everything into a Spot Extension, as described in the Manage Payload Software in CORE I/O section. Deploy and manage the Spot Extension in the CORE I/O payload (also described in the section linked above). To manage the docker images on any other compute payload, please refer to the Command-line Configuration section. Running the dockerized application on a computation payload attached to Spot removes the need for WiFi connectivity between Spot and a stationary computation environment, improving Spot's autonomy.

Multiple Spot SDK examples support dockerization and running as docker containers.

The image the customer is using is openjdk-11-slim. I tried creating the build with the same openjdk image from Docker Hub, but I can't reproduce the issue there. The only difference is that the customer pulls the OpenJDK image from their internal registry, so I think this could be a docker image issue. We have a couple of workarounds in case another customer hits the same issue with their image:

1. Untar the package locally and then use the COPY command.
2. COPY the package to the desired directory, then use the RUN command.

I have attached the sample Dockerfile with the above two steps, which explain the workarounds:

- Workaround 1, extract the agent tar locally and then copy: `COPY tomcat_appllication_agent_v1.`
- Workaround 2, or use the RUN command as below: `RUN cd /root/AgentDep2 && tar -xf tomcat_appllication_agent_v1.`

Note: only one of the two workarounds is needed.
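The first workaround (untarring the agent package locally before the Dockerfile's COPY step) can be sketched in Python. This is a minimal illustration, not the attached Dockerfile's actual tooling: the function name `extract_agent`, the `agent` output directory, and the archive path passed in are all hypothetical, since the original archive filename is truncated in the note.

```python
import tarfile
from pathlib import Path


def extract_agent(archive: Path, build_context: Path) -> Path:
    """Unpack an agent archive into the Docker build context (workaround 1).

    `archive` and the "agent" directory name are illustrative assumptions.
    """
    target = build_context / "agent"  # hypothetical directory name
    target.mkdir(parents=True, exist_ok=True)
    root = target.resolve()

    with tarfile.open(archive) as tar:
        # Reject members that would be written outside the target directory.
        for member in tar.getmembers():
            resolved = (root / member.name).resolve()
            if not resolved.is_relative_to(root):  # Python 3.9+
                raise ValueError(f"unsafe path in archive: {member.name}")
        tar.extractall(root)

    return target
```

With the files already extracted into the build context, the Dockerfile would only need a plain COPY of that directory (for example into `/root/AgentDep2`, the path used in the note), so no `tar` invocation has to run inside the image.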
